Job orchestration with Airflow: Boost efficiency in data pipelines

Apache Airflow is a standout player in the lineup of data orchestration tools available today. Discover how it can streamline task management and execution.

As the universe of data orchestration tools expands, pinpointing the right tool becomes a unique challenge. Here, we focus on Apache Airflow: its features and use cases and how it can streamline your workflows.

What is Apache Airflow?

Apache Airflow is more than just a workflow management system. It’s a platform for authoring, scheduling and monitoring workflows, used by data engineers and developers across the globe. Created at Airbnb in 2014 and later donated to the Apache Software Foundation, Airflow quickly positioned itself as an indispensable tool in data orchestration, thanks mainly to its transparent, open-source nature.

One of Airflow’s most notable features is its user-centric design. The platform boasts an intuitive user interface, making it easier for users to visualize, monitor and manage complex data workflows. This user-friendliness, combined with its potent capability to design intricate data pipelines, makes it a preferred choice for many in the industry.

At the heart of Apache Airflow’s functionality lie directed acyclic graphs, commonly known as DAGs. A DAG maps out a set of tasks, the order in which they run and the dependencies between them. Because the graph is acyclic, no task can end up depending on itself, directly or indirectly.

DAGs determine the order of tasks and ensure that data moves along the path you’ve outlined, so Airflow workflows execute in a predictable sequence. Because DAGs are written in Python, users can tap into a vast ecosystem of libraries and tools, which offers considerable flexibility in workflow creation and modification. Whether you’re dealing with simple tasks or grappling with the intricacies of large-scale data operations, Apache Airflow can streamline your data orchestration efforts.
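To make the ordering idea concrete, here is a minimal sketch that uses Python’s standard-library `graphlib` module rather than Airflow itself. The task names are hypothetical. The point is only that declaring what each task depends on is enough to derive a valid execution order.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each key is a task, each value is the set of
# tasks that must finish before that task can start.
dependencies = {
    "extract_orders": set(),
    "extract_customers": set(),
    "join_data": {"extract_orders", "extract_customers"},
    "publish_report": {"join_data"},
}

# static_order() yields tasks so that every task comes after its dependencies.
execution_order = list(TopologicalSorter(dependencies).static_order())
print(execution_order)
```

The two extract tasks may appear in either order because neither depends on the other. If a dependency loop were introduced, `static_order()` would raise `graphlib.CycleError`, which illustrates why the graph has to be acyclic.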

Why choose Apache Airflow for job orchestration?

Airflow brings numerous advantages to the table, including:

- Extensibility: Built to be extended, Airflow integrates with platforms from AWS to Google Cloud and Kubernetes. You can add new integrations by writing custom operators and hooks in Python, and the REST API lets external systems interact with Airflow.

- Open-source flexibility: Airflow’s vast community of data engineers and developers continually contributes improvements, helping you remain on the cutting edge.

- Scalability: Whether you’re managing a small dataset or a very large one, Airflow can spread tasks across multiple workers to keep pace with growing workloads.

- Templating: Airflow uses Jinja templating to generate task parameters and paths dynamically at run time.
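As a rough illustration of templating, the sketch below fills a `{{ ds }}` placeholder in a file path. Airflow relies on the Jinja engine for this. The `render` helper here is a hypothetical, simplified stand-in built on the standard library so the example runs without extra packages, and the bucket path is made up.

```python
import re
from datetime import date


def render(template: str, context: dict) -> str:
    """Replace each {{ name }} placeholder with its value from context."""
    def substitute(match: re.Match) -> str:
        return str(context[match.group(1)])

    return re.sub(r"\{\{\s*(\w+)\s*\}\}", substitute, template)


# "ds" mirrors the date-stamp variable Airflow exposes to templates;
# the value is hard-coded here purely for illustration.
context = {"ds": date(2024, 1, 15).isoformat()}
path = render("s3://example-bucket/sales/{{ ds }}/data.csv", context)
print(path)  # s3://example-bucket/sales/2024-01-15/data.csv
```

The same task definition can then read a different path on every run, because only the context changes.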

Airflow architecture and big data

Airflow’s architecture supports the complexities of big data environments because it’s built to coordinate large-scale data processing tasks.

DAGs allow you to specify dependencies and sequences that go beyond simple, linear progressions, and Airflow’s web server centralizes monitoring, making it ideal for managing high volume and variety.

Organizations dealing with big data often have multiple data sources, compute environments and storage systems, such as Snowflake and Microsoft Azure, so their orchestration platform must have a scalable architecture. Airflow can coordinate data science applications, data analytics tools and other systems within a single workflow, which helps keep workflow creation and modification reliable.

Using Apache Airflow

When you start using Apache Airflow, you’ll primarily interact with Airflow DAGs. This is where the power of Python shines. Developers can leverage Python code to write custom operators and hooks. You can define individual tasks and specify the relationships and dependencies between them. This granular control allows you to create tailored data pipelines that meet your specific needs.
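In Airflow DAG files, dependencies between tasks are commonly written with the `>>` operator. The toy sketch below shows how that style of syntax can work in plain Python. The `Task` class is a hypothetical stand-in, not Airflow’s own implementation, and it only records which tasks come next.

```python
class Task:
    """Toy stand-in for a workflow task; not Airflow's own class."""

    def __init__(self, task_id: str):
        self.task_id = task_id
        self.downstream = []

    def __rshift__(self, other: "Task") -> "Task":
        # `a >> b` records b as downstream of a and returns b,
        # so chains such as a >> b >> c read left to right.
        self.downstream.append(other)
        return other


extract = Task("extract")
transform = Task("transform")
load = Task("load")

# Declare the relationships: extract runs first, then transform, then load.
extract >> transform >> load

print([t.task_id for t in extract.downstream])    # ['transform']
print([t.task_id for t in transform.downstream])  # ['load']
```

Keeping the dependency declarations on one line makes the shape of the pipeline easy to read at a glance, which is part of the appeal of defining workflows in code.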

Two key components that aid you in this journey are the Airflow scheduler and Airflow UI. The scheduler initiates the tasks based on time or external triggers. It ensures your jobs run as they’re supposed to, adhering to the schedules and dependencies you’ve outlined.
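The time-based side of scheduling can be pictured with a small sketch. This is a simplified illustration, not Airflow’s scheduler: the `due_runs` function is hypothetical, and it assumes that a run becomes due only once the interval it covers has fully elapsed, which is similar to how Airflow treats scheduled runs.

```python
from datetime import datetime, timedelta


def due_runs(start: datetime, interval: timedelta, now: datetime) -> list[datetime]:
    """Return the start of every interval that has fully elapsed by `now`."""
    runs = []
    interval_start = start
    while interval_start + interval <= now:
        runs.append(interval_start)
        interval_start += interval
    return runs


# A daily schedule starting on January 1, checked on the morning of January 4.
runs = due_runs(
    start=datetime(2024, 1, 1),
    interval=timedelta(days=1),
    now=datetime(2024, 1, 4, 6, 0),
)
print([run.date().isoformat() for run in runs])
# ['2024-01-01', '2024-01-02', '2024-01-03']
```

The interval that began on January 4 is not yet listed because it has not finished, so there is no complete day of data for it to process.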

The Airflow UI provides a visual dashboard to track your workflow’s progress. It offers detailed logging, alerting mechanisms and various other utilities that keep you informed and facilitate quick debugging and modification. The UI is a valuable tool for managing and monitoring the operational aspects of your data pipelines.

Let’s consider a practical application. Suppose you’re tasked with collecting data from multiple sources, like a data lake or a data warehouse, for near-real-time analytics. Once gathered, this data must be processed and sent to different visualization tools. Airflow can automate this entire workflow, from data gathering and processing to final delivery.

For those just learning to use Airflow, there are numerous tutorials available on GitHub and other platforms.

Airflow alternatives

Airflow is not the only option in the market for data orchestration and workflow management. Jenkins, dbt and several other tools have carved out their niches.

You may wonder: “Can Airflow replace Jenkins?” or “How does Airflow differ from dbt?” Instead of getting swayed by popular opinion, evaluate these tools based on your specific requirements.

If ETL processes are a significant part of your operations, you’ll be pleased to know that Airflow excels in this domain. It provides a flexible and powerful framework to manage and automate your ETL processes, ensuring data is processed efficiently.

However, you may need a more holistic orchestration solution.

How ActiveBatch augments your Airflow experience

Apache Airflow is a leading choice in job orchestration, enhancing workflows with precision and efficiency. By complementing it with robust solutions like ActiveBatch, you can truly achieve unparalleled orchestration sophistication.

As the easiest-to-use workload automation platform in the marketplace, ActiveBatch extends the power of your IT team and reliably runs your business processes, going beyond just job orchestration. It provides true, full-fledged workflow orchestration and seamlessly integrates with platforms like Azure.

If you’re searching for an enterprise-grade solution that provides scalability and reliability, ActiveBatch might be just what you’re looking for.

Book a demo today to preview how it can optimize your job orchestration.

Airflow orchestration FAQs

What is Airflow orchestration?

Airflow orchestration leverages Apache Airflow for crafting, scheduling and supervising workflows through directed acyclic graphs (DAGs), which represent task sequences and dependencies. DAGs ensure tasks run in the correct order, and task-level settings such as retries define what happens in the event of a failure. With Airflow, complex data workflows are manageable from a central platform that increases the efficiency, scalability and reliability of data pipelines.
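Failure handling often comes down to retrying a task a set number of times before giving up. The sketch below shows that idea in plain Python. The `run_with_retries` helper and the flaky task are hypothetical. The parameter names `retries` and `retry_delay` are chosen to echo the task settings Airflow offers, but this is not Airflow’s implementation.

```python
import time


def run_with_retries(task, retries: int = 2, retry_delay: float = 0.0):
    """Call task(); on failure, retry up to `retries` more times.

    Returns the task's result together with the number of attempts made.
    """
    attempts = 0
    while True:
        attempts += 1
        try:
            return task(), attempts
        except Exception:
            if attempts > retries:
                raise  # out of retries: surface the original error
            time.sleep(retry_delay)


# Hypothetical flaky task: fails twice, then succeeds.
calls = {"count": 0}


def flaky_extract():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return "42 rows"


result, attempts = run_with_retries(flaky_extract, retries=2)
print(result, attempts)  # 42 rows 3
```

With `retries=2`, the task gets three attempts in total. A task that still fails on the last attempt raises its error, at which point a real orchestrator would mark it as failed and skip anything that depends on it.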

What is Airflow used for?

Apache Airflow is used to design, schedule and monitor workflows programmatically. It excels at orchestrating complex data pipelines and automating tasks such as data processing and machine learning model training. Airflow is flexible, connecting with various data sources, storage systems and cloud services to handle both simple and intricate workflows.

Is Airflow an ETL?

Apache Airflow is not an ETL tool in itself. It is an orchestrator, and an excellent choice for running extract, transform and load (ETL) processes. With its DAGs and the power of Python, you can design intricate ETL workflows that efficiently extract data from sources, transform it as needed and then load it into target systems. Its extensibility means you can integrate with various data sources and platforms to make your ETL process more dynamic and adaptive.

What is the difference between Jenkins and Airflow?

Both shine in the orchestration arena, but Apache Airflow excels in data workflows, while Jenkins champions continuous integration and continuous delivery (CI/CD). Airflow orchestrates complex data workflows and is best for data-centric tasks. Jenkins manages software development workflows such as building, testing and deploying code.

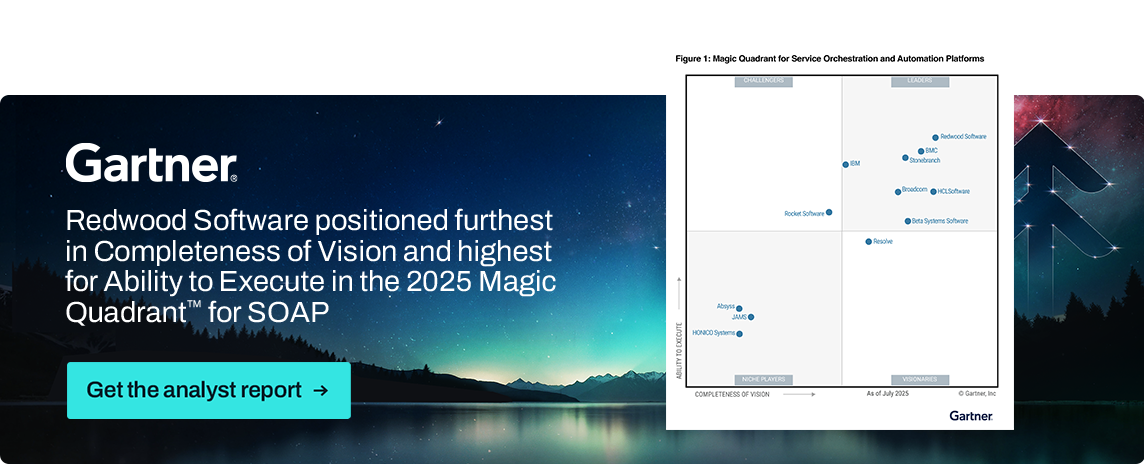

GARTNER is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally, and MAGIC QUADRANT is a registered trademark of Gartner, Inc. and/or its affiliates and are used herein with permission. All rights reserved.

These graphics were published by Gartner, Inc. as part of a larger research document and should be evaluated in the context of the entire document. The Gartner document is available upon request from https://www.advsyscon.com/resource/gartner-mq/.