The evolution of ETL in the age of automated data management

Data management has evolved from a time-consuming process to a fully automated one. Particularly for ETL processes, workload automation offers a reliable, efficient solution.

Since the dawn of computing, data management has been a key element in optimizing business operations. Over the last 50 to 60 years, it has evolved considerably: data was once managed with primitive, inefficient practices and was a low priority for most businesses.

The complexity and ubiquity of data today mandate specific strategies and advanced technologies.

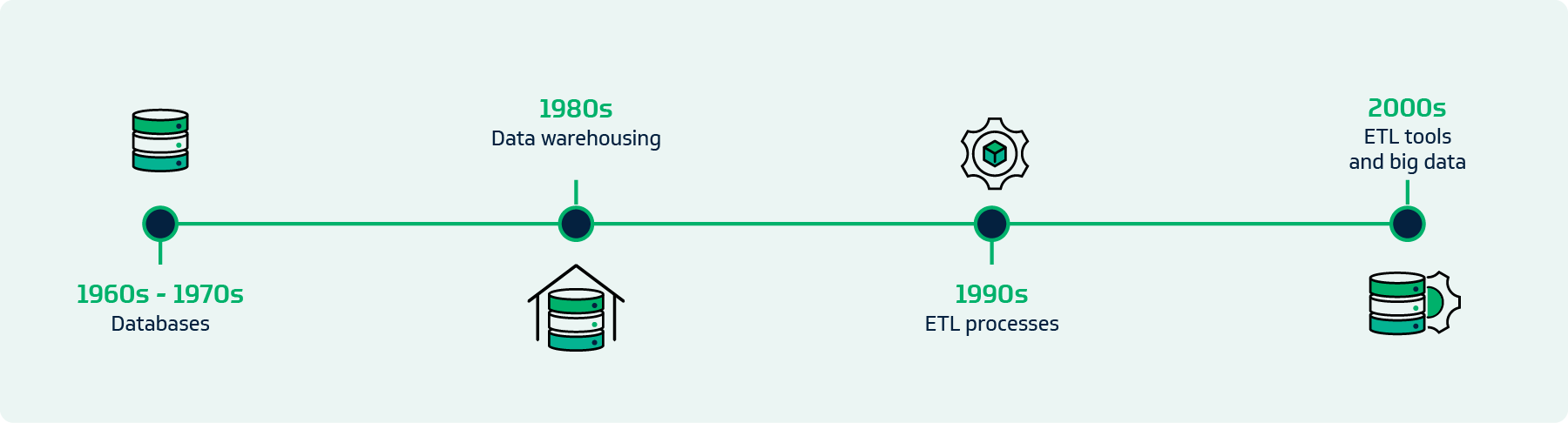

A brief history of data management practices

When computers became widespread, businesses stored data in file-based systems. These systems couldn’t handle large volumes of data, and at the time they didn’t need to.

The 1960s and ’70s saw the advent of databases. Edgar F. Codd’s introduction of the relational model in 1970 revolutionized how data was stored, managed and retrieved. Relational databases laid the groundwork for more advanced data management practices.

In the 1980s, the concept of data warehousing emerged. Now, IT teams and leaders could rely on a centralized repository to consolidate data. By the 1990s, growing amounts of data created a need for extract, transform and load (ETL) processes. More and more businesses found it essential to extract data from disparate sources, transform it into a consistent format and load it into data warehouses.

The evolution of ETL

ETL practices started out as labor-intensive. Data engineers had to write custom scripts, which were prone to errors and often led to data quality issues. The manual approach was time-consuming and created bottlenecks in data management workflows.

The emergence of ETL tools such as Informatica, Talend and Microsoft SSIS in the late 1990s and 2000s marked a major milestone in the world of data management. Now, there were solutions for data integration, which reduced the need for custom scripting and manual intervention. However, configuring, scheduling and monitoring ETL processes still required a lot of human oversight.

The rise of big data and cloud computing

The early 2000s brought about an explosion of big data, fueled by the advent of social media, Internet of Things (IoT) devices and the proliferation of online transactions. Organizations began generating and collecting vast amounts of data, which presented new challenges to traditional ETL processes. The sheer volume and velocity of data required more robust and scalable solutions.

Cloud-based data warehousing solutions such as Amazon Redshift, Google BigQuery and Snowflake emerged to address these challenges. They offered significant processing power and scalable storage. Despite these improvements, handling ETL processes in increasingly complex environments was still cumbersome.

Automation: The future of ETL is now

ETL automation encompasses the entire data pipeline — transforming how you ingest, process and load data into target systems. Here’s how automation drives efficiency in each of the three stages of ETL:

- Data extraction: This is no longer a manual task. Advanced ETL solutions use connectors to grab data from different source systems like CRMs, databases (SQL and NoSQL), SaaS applications and even unstructured data sources like social media feeds and IoT devices.

- Data transformation: Once they extract raw data, ETL tools apply complex transformations to cleanse, deduplicate and reformat it. This stage may involve converting data types and applying business rules so the result is a cohesive dataset.

- Data loading: Automated ETL tools load data into a target database, data warehouse or data lake without disrupting operations, either by scheduling loads during off-peak hours or by using incremental loads that move only new or changed records.
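The three stages above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular ETL product works: the in-memory `SOURCE_ROWS` list stands in for a real connector, the `orders` table and the `updated_at` watermark column are hypothetical names, and SQLite plays the role of the target warehouse.

```python
import sqlite3

# Hypothetical source rows, standing in for records pulled through a connector.
SOURCE_ROWS = [
    {"id": 1, "email": " Ana@Example.com ", "amount": "19.90", "updated_at": "2024-01-01"},
    {"id": 2, "email": "bo@example.com", "amount": "5", "updated_at": "2024-01-02"},
    {"id": 2, "email": "bo@example.com", "amount": "5", "updated_at": "2024-01-02"},  # duplicate
    {"id": 3, "email": "cy@example.com", "amount": "42.00", "updated_at": "2024-01-03"},
]


def extract(rows, watermark):
    """Incremental extract: keep only rows changed after the last successful load."""
    return [r for r in rows if r["updated_at"] > watermark]


def transform(rows):
    """Cleanse emails, convert amounts to floats and deduplicate by id."""
    unique = {}
    for r in rows:
        unique[r["id"]] = {
            "id": r["id"],
            "email": r["email"].strip().lower(),
            "amount": float(r["amount"]),
            "updated_at": r["updated_at"],
        }
    return list(unique.values())


def load(conn, rows):
    """Upsert rows into the target table so that reruns do not create duplicates."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders ("
        "id INTEGER PRIMARY KEY, email TEXT, amount REAL, updated_at TEXT)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO orders (id, email, amount, updated_at) "
        "VALUES (:id, :email, :amount, :updated_at)",
        rows,
    )
    conn.commit()


def run_pipeline(conn, source_rows, watermark):
    """Run extract, transform and load once; return the new watermark."""
    rows = transform(extract(source_rows, watermark))
    load(conn, rows)
    return max((r["updated_at"] for r in rows), default=watermark)
```

A scheduler would call `run_pipeline` during an off-peak window and store the returned watermark for the next run, so each run touches only records that changed since the previous one.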

Artificial intelligence (AI), machine learning (ML) and robotic process automation (RPA) technologies have added even more power and reliability across the entire ETL workflow. For example, ML algorithms can flag data quality problems and apply corrections automatically.

With automated ETL, you can also run hands-off data validation and quality checks, so you can be confident that your data processing is accurate. Less manual effort means less risk of human error and, therefore, more consistent data outcomes.
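As a rough illustration of what a hands-off quality check might look like, the sketch below validates a batch of records before it is loaded. The field names (`id`, `email`, `amount`) and the three rules are assumptions chosen for the example, not the rules of any specific tool.

```python
def validate(rows, required=("id", "email"), unique_key="id"):
    """Return a list of readable issues; an empty list means the batch passed."""
    issues = []
    seen = set()
    for i, row in enumerate(rows):
        # Completeness: every required field must be present and non-empty.
        for field in required:
            if row.get(field) in (None, ""):
                issues.append(f"row {i}: missing {field}")
        # Uniqueness: the key must not repeat within the batch.
        key = row.get(unique_key)
        if key is not None:
            if key in seen:
                issues.append(f"row {i}: duplicate {unique_key}={key}")
            seen.add(key)
        # Business rule (hypothetical): amounts cannot be negative.
        amount = row.get("amount")
        if amount is not None and amount < 0:
            issues.append(f"row {i}: negative amount {amount}")
    return issues


batch = [
    {"id": 1, "email": "ana@example.com", "amount": 10.0},
    {"id": 1, "email": "", "amount": -5.0},
]
for issue in validate(batch):
    print(issue)
```

An automated pipeline could refuse to load a batch when `validate` returns any issues and raise an alert instead, which keeps bad records out of the warehouse without anyone inspecting the data by hand.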

Use cases of automated ETL

The benefits of automated ETL processes are vast. Today’s IT automation technology drives tangibly better business outcomes for a variety of industries and use cases.

Utilities

ETL automation is pivotal for managing data generated by smart meters and sensors in the utilities sector. Providers rely on automated tools to aggregate and analyze data in real time so they can derive insights into energy consumption patterns and detect anomalies or faults in the grid. This visibility helps streamline operations and improve customer service.
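To make the idea concrete, here is a simplified batch sketch of aggregating meter readings and flagging unusual ones. The `(meter_id, kwh)` tuple format and the rule "more than three times the meter's median" are illustrative assumptions; a production system would likely use streaming data and a more robust statistical model.

```python
from collections import defaultdict
from statistics import median


def find_anomalies(readings, factor=3.0):
    """Flag readings greater than `factor` times the meter's median consumption."""
    # Aggregate: group readings by meter.
    by_meter = defaultdict(list)
    for meter_id, kwh in readings:
        by_meter[meter_id].append(kwh)

    # Analyze: compare each reading with what is typical for that meter.
    anomalies = []
    for meter_id, values in by_meter.items():
        typical = median(values)
        for kwh in values:
            if typical > 0 and kwh > factor * typical:
                anomalies.append((meter_id, kwh))
    return anomalies


readings = [
    ("m1", 1.0), ("m1", 1.2), ("m1", 0.9), ("m1", 9.5),
    ("m2", 2.0), ("m2", 2.1), ("m2", 1.9),
]
print(find_anomalies(readings))
```

The median is used here instead of the mean so that a single extreme reading does not distort the baseline it is being compared against.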

Manufacturing

In manufacturing, ETL processes are integral for pulling data from sources like production machines, supply chain systems and ERP systems. With tools that automatically integrate this data, manufacturers can optimize production schedules and reduce delivery times. For example, a car manufacturer can use automated ETL to monitor the performance of assembly line robots and predict maintenance needs and downtime.

Healthcare

The healthcare sector has seen radical improvements as a result of automating ETL. Providers can now integrate data from electronic health record (EHR) systems, medical devices and scheduling platforms, among others, to get a comprehensive view of a patient’s health. A hospital may consolidate patient data from different departments and perform predictive analysis to identify high-risk patients and drive more proactive care.

Ready to implement ETL automation?

With a range of ETL solutions on the market, how do you know which one is right for your business needs? Think about these top five components as you vet platforms.

- Data quality and validation: Robust data validation and data cleansing mechanisms are non-negotiable. You need to be able to automate checks for data integrity, consistency and accuracy from extraction to loading.

- Integration: You’ll need to be able to integrate your ETL solution with a wide range of data sources and target systems, both on-premises and cloud-based. The most appealing platform will be highly extensible, offering built-in integrations, APIs and compatibility with your must-have tools.

- Monitoring and reporting: Alongside ETL processes, data reporting has come a long way. You no longer need to rely solely on historical data. Instead, choose a tool that enables you to react to changing market conditions with real-time information and modern data visualization features.

- Real-time data processing: To generate the insights you need, your data flows have to be up to date and uninterrupted. Automation has made real-time ETL processes possible.

- Scalability: With the exponential growth of data comes a need for technology that can keep up over the long term. The best automated ETL tools leverage cloud computing to give you scalable storage and processing power.

Because it offers all of the above, advanced workload automation (WLA) can enhance your data management practices at a scale far beyond what’s possible with limited ETL automation solutions.

What’s your data trying to say?

Data always tells a story, but you won’t be able to discern its core message if your data management practices are obsolete. Stop struggling to move and consolidate your data and take advantage of this advanced stage of ETL evolution.

The easiest-to-use WLA platform, ActiveBatch by Redwood, offers extensive connectivity, dashboards and advanced scheduling to make managing ETL processes straightforward. Give your team the gifts of efficiency and informed decision-making: Book a demo.